Is the 13900K a game changer for MSFS?

Just wait for independent third parties to benchmark the CPU in MSFS. There's really no point in guessing.

Would it be a game changer if you had, say, 15% more power than your current setup? I don’t know. I guess it would be noticeable and measurable, but a game changer? I guess not!

wondering if it can surpass the 5800x3d

They are almost doubling the L2 & L3 cache…

I mean we all saw what a big jump in cache did for the 5800 3D…

Plus a bump from 5.2 to 5.8 ghz…

I honestly think it will be around a 15% increase in performance.

Marc

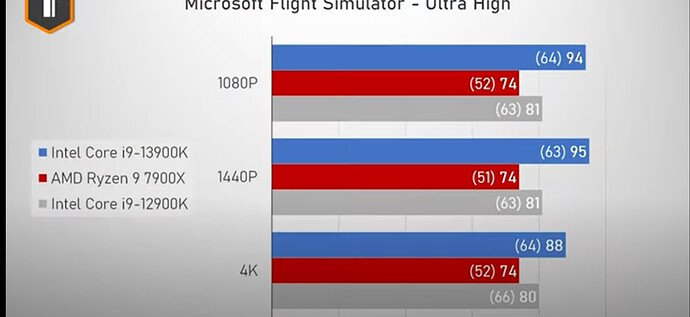

Some benchmarks pointed out that 5800X3D is already surpassed by i9-12900K with 4K gaming.

I would hope the 13900k can surpass the 5800X3D. The upcoming Zen 4 with V-cache (7800X3D, etc) will compete with the 13900k.

This is a misleading statement. The benefit of huge L3 cache is less pronounced on some titles, and at 4K, you’re usually GPU bound anyways. CPU gaming benchmarks are done at 720p and 1080p for this reason.

So, who has one to test?

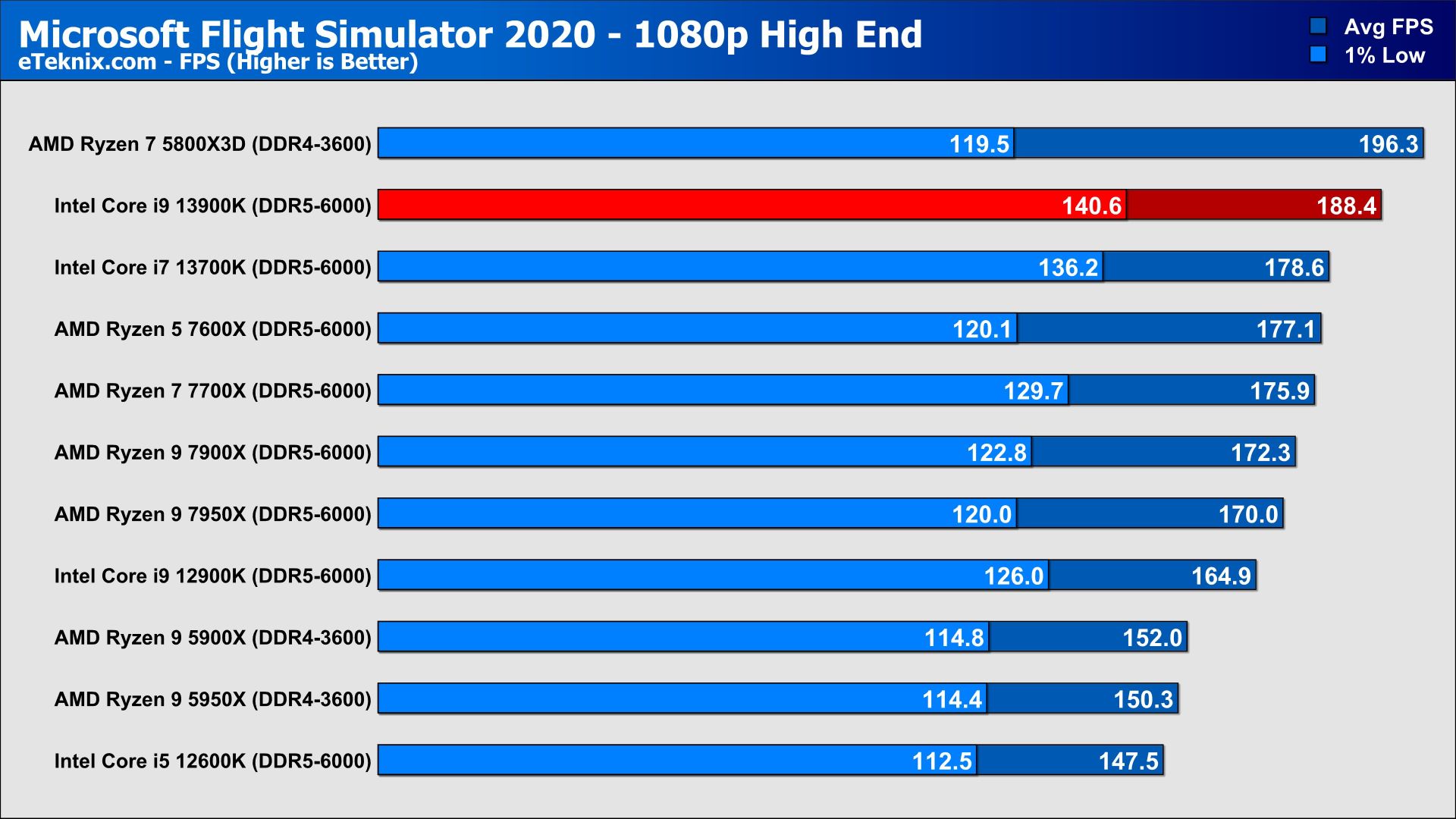

Would be interesting to see how much of a penalty DDR4 is in MSFS. Digital Foundry (Eurogamer) is touting a 14% increase in performance in MSFS from a 13900K over a 12900K with DDR5-6000 (disclaimer: the test is unlikely to have included complex add-ons).

Sounds real good, ddr5 6000 is a nice bump

Most likely just a different test spot that does not load the cache as much. Also Ultra vs High End settings. All CPUs in this test score much higher FPS overall.

IMHO those benchmarks are pointless. Those who can afford a high-end CPU wouldn’t play at FHD, nor would they pair it with a budget GPU. Conversely, those on a tighter budget are just as unlikely to mix components the opposite way.

What use is an FHD benchmark that only proves that, under an unrealistic scenario, someone’s preferred component is or isn’t better?

For me it would be much more interesting to know which cpu is ahead in VR with a 4090 and a G2 and maximized settings f.e…

The benchmarks should be based more on everyday conditions and compare different realistic PC configurations.

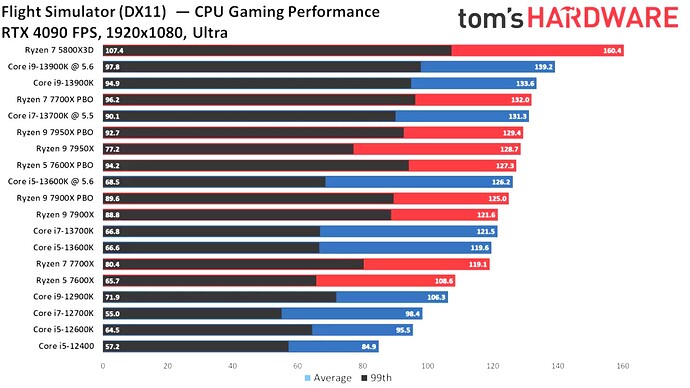

While I agree that seeing a full resolution test is also beneficial, these 1080p tests do actually provide some very useful info for us by isolating the CPU from interference from any graphics bottlenecks. A realistic full resolution use case will usually be GPU limited and mask the true performance of the CPU.

The most important bits the 1080p tests show are the 1% lows in fps and more importantly the difference between max fps and the 1% low for each cpu. That right there is your smoothness metric. If the gulf between max fps and the 1% is huge you can expect stutters in the sim to be pretty severe, regardless of resolution. The closer they are together the more responsive the cpu will be to sudden scenery loading demands and the smoother your flights will be.
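To make that gap concrete, here is a rough sketch of how it could be computed from a frame-time capture. Two assumptions: "1% low" is taken to mean the average FPS over the slowest 1% of frames (review sites do not all define it the same way), and the frame times below are made up for illustration, not measured on any CPU in this thread.

```python
def one_percent_low_fps(frame_times_ms):
    """FPS averaged over the slowest 1% of frames (assumed definition)."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)  # at least one frame
    worst = slowest_first[:count]
    return 1000.0 / (sum(worst) / len(worst))


def smoothness_gap(frame_times_ms):
    """Return (max_fps, low_fps, gap), where gap = max_fps - low_fps."""
    max_fps = 1000.0 / min(frame_times_ms)  # fastest single frame
    low_fps = one_percent_low_fps(frame_times_ms)
    return max_fps, low_fps, max_fps - low_fps


# Hypothetical captures: a steady run, and the same run with two 40 ms hitches.
steady = [10.0] * 200
hitchy = [10.0] * 198 + [40.0] * 2

for name, capture in (("steady", steady), ("hitchy", hitchy)):
    max_fps, low_fps, gap = smoothness_gap(capture)
    print(f"{name}: max {max_fps:.0f} fps, 1% low {low_fps:.0f} fps, gap {gap:.0f}")
```

Both captures have nearly the same average frame rate, but only the second one has a large gap, and that is the one that would feel stuttery.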

If you run the sim with any kind of fps lock (vsync on or MR in VR) the 1080p charts give a direct read on how much of an unexpected hit each processor can absorb per frame in a worst case scenario before stuttering (think approaching and loading in big addon airports etc).
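As a back-of-the-envelope sketch of that headroom idea: with a frame rate lock, each frame has a fixed time budget, and whatever the CPU does not use is slack that can absorb a sudden load. The function name and the numbers are hypothetical, and this ignores the GPU entirely.

```python
def frame_headroom_ms(locked_fps, cpu_limited_fps):
    """Spare milliseconds per frame when the sim is locked to locked_fps.

    cpu_limited_fps is what the CPU manages with no GPU limit, for example
    the 1% low from a 1080p chart. A negative result means the CPU cannot
    hold the lock at all.
    """
    budget_ms = 1000.0 / locked_fps
    cpu_ms = 1000.0 / cpu_limited_fps
    return budget_ms - cpu_ms


# Hypothetical 1% lows for two CPUs, with the sim locked to 30 fps.
for name, low_fps in (("cpu_a", 60.0), ("cpu_b", 40.0)):
    print(f"{name}: {frame_headroom_ms(30.0, low_fps):.1f} ms of headroom per frame")
```

With these made-up figures the first CPU has roughly twice the slack of the second at the same lock, even though both would show an identical 30 fps on the counter.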

This is shown nicely in the difference between the 13900K and 5800X3D. The X3D is a remarkable processor but it isn’t actually that strong under the hood. Its performance comes from cache hits. If those are steady and the demands on the CPU are moderate it really flies, but when it gets hit with a really big demand and a ton of cache misses then it can fall pretty hard due to its lower clock speed and weaker IPC. Stutter resilience and recovery have typically been an Intel strong suit due to their generally higher clock speeds.

The 13900k’s power demands though… oy.

These tests are about pure CPU performance, nothing else. There is no point in testing CPUs against each other while the game is stuck at the GPU limit. To prevent this, tests are carried out in 1080p.

The CPU that performs best in 1080p also has the best performance “in VR with a 4090 and a G2 and maximized settings f.e…”. But it doesn’t bring you much, because the GPU is probably running at its limit in this scenario. Which brings us back to the beginning.
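A toy model of the GPU limit argument, for anyone who wants to see it in numbers. It assumes the delivered frame rate is simply the lower of what the CPU and the GPU can each sustain, which is a simplification, and every figure below is invented for illustration.

```python
def delivered_fps(cpu_limit_fps, gpu_limit_fps):
    """Simplified model: the slower of the two stages sets the frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Hypothetical limits, not measurements.
cpus = {"cpu_a": 90.0, "cpu_b": 70.0}
gpu_limits = {"1080p": 160.0, "4K": 55.0}

for resolution, gpu_fps in gpu_limits.items():
    results = {name: delivered_fps(cpu_fps, gpu_fps) for name, cpu_fps in cpus.items()}
    print(resolution, results)
```

At the hypothetical 1080p GPU limit the two CPUs show their real difference. At the 4K limit both land on the same number, so the chart says nothing about which CPU is faster.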

Got my 13900K up and running. Loaded the NYC scenario I’d saved from my previous rig, which had given me 40-42 fps on the 10900K with both my 3080 and the 4090 when frame doubling was not active. The same scenery gave me about 70-75 fps with frame doubling active.

So I was disappointed to see that I was only getting 65-67 fps on the 13900K in the same scenario. What the heck had I done wrong in settings that I was getting a slightly slower frame rate on the system with the new CPU?

Then I went into settings and realized the beta opt-in wasn’t in effect on the new system, and I was running SU10. So that 65 fps was without frame doubling!

Heading out to dinner so will have to check the frame-doubled SU11 beta results later tonight, but definitely looks like the 13900K is a worthwhile upgrade for the CPU-bound if you’re running 10900K or earlier!

And back from dinner and under SU11 with the 4090, DLSS set to quality, and the frame doubler enabled, getting 120-140 fps over NYC in the Cessna 208. Awesome – nearly double the 10900K, much better boost than I expected.
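For reference, the uplift implied by the ranges quoted above can be worked out like this. Using the midpoint of each range is my own assumption, and note the non-doubled 13900K figure was on SU10 while the doubled one was on the SU11 beta, so this is a rough comparison rather than a controlled one.

```python
def midpoint(low, high):
    """Middle of a reported fps range."""
    return (low + high) / 2.0


def uplift_percent(old_fps, new_fps):
    """Percentage gain going from old_fps to new_fps."""
    return (new_fps / old_fps - 1.0) * 100.0


# (10900K range, 13900K range) as reported in the posts above, same NYC scenario.
reported = {
    "no frame doubling": ((40, 42), (65, 67)),
    "frame doubling on": ((70, 75), (120, 140)),
}

for label, (old_range, new_range) in reported.items():
    old_mid = midpoint(*old_range)
    new_mid = midpoint(*new_range)
    gain = uplift_percent(old_mid, new_mid)
    print(f"{label}: {old_mid:.1f} -> {new_mid:.1f} fps, {gain:+.0f}%")
```

On midpoints that comes out to roughly +61% without frame doubling and roughly +79% with it, which lines up with the "nearly double" impression at the top of the reported range.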