I have limited knowledge on this subject, but from what I understand of the video, they use a SteamVR driver to inject Leap Motion input into SteamVR itself. This means that applications using the SteamVR APIs see the Leap Motion as a controller. I believe that when you are using the “OpenXR runtime for SteamVR”, the OpenXR API calls are converted to SteamVR API calls. So when the application queries the controller input through OpenXR, it receives the input from the SteamVR API, i.e. the Leap Motion input.

As shown above (hypothetically), the OpenXR runtime is not really involved in any hand tracking. It passes the information through from SteamVR to the application. Because the Leap driver injects the data directly into SteamVR, it just works.
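To make that pass-through idea concrete, here is a rough toy model in Python. It is only a sketch of my understanding: the class and method names (`SteamVR`, `LeapDriver`, `OpenXRRuntimeForSteamVR`, `register_controller`, `query_controller`) are made up for illustration and are not the real SteamVR or OpenXR APIs.

```python
# Toy model only: every class and method name here is hypothetical.

class SteamVR:
    """Stands in for SteamVR: keeps one state-reading callback per controller role."""

    def __init__(self):
        self.controllers = {}

    def register_controller(self, role, read_state):
        self.controllers[role] = read_state

    def get_controller_state(self, role):
        return self.controllers[role]()


class LeapDriver:
    """Stands in for the Leap driver: presents a tracked hand as a controller."""

    def __init__(self, steamvr):
        steamvr.register_controller("left", self.read_left_hand)

    def read_left_hand(self):
        # A real driver would read the sensor; this returns a fixed sample.
        return {"source": "leap", "trigger_pressed": True}


class OpenXRRuntimeForSteamVR:
    """Stands in for the OpenXR runtime: it only forwards the query to SteamVR."""

    def __init__(self, steamvr):
        self.steamvr = steamvr

    def query_controller(self, role):
        return self.steamvr.get_controller_state(role)


steamvr = SteamVR()
LeapDriver(steamvr)
openxr_runtime = OpenXRRuntimeForSteamVR(steamvr)

state = openxr_runtime.query_controller("left")
print(state)
```

In this model the runtime contains no hand tracking logic at all. The data reaches the application only because the driver registered itself one level below.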

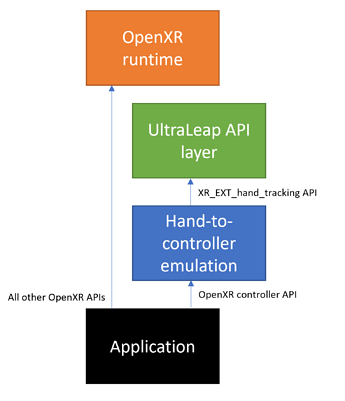

This is fundamentally different from what the UltraLeap API layer that you posted is doing. An OpenXR API layer is inserted between the application and the OpenXR runtime, in this case adding the hand tracking functionality through the XR_EXT_hand_tracking API. The underlying OpenXR runtime is not involved at all. The OpenXR runtime does not see the API layer.

When an application implements hand tracking via the XR_EXT_hand_tracking API, the UltraLeap API layer intercepts the calls to the XR_EXT_hand_tracking API and responds to them.

But if the application does not make use of the XR_EXT_hand_tracking API, then the layer does nothing. The traditional controller APIs are still handled by the OpenXR runtime, which does not see the API layer anyway.
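The two cases above (hand tracking calls answered by the layer, everything else falling through to the runtime) can be sketched as a small dispatch chain. This is a toy model in Python, not real layer code: actual API layers are native libraries chained together by the OpenXR loader, and the `Runtime` and `HandTrackingLayer` classes and their `call` method are hypothetical. Only the function names passed as strings (`xrLocateHandJointsEXT`, `xrCreateHandTrackerEXT`, `xrSyncActions`) and the `XR_ERROR_FUNCTION_UNSUPPORTED` result come from OpenXR.

```python
# Toy model only. Real API layers are native libraries chained by the OpenXR
# loader; Runtime and HandTrackingLayer are hypothetical classes.

class Runtime:
    """Stands in for the OpenXR runtime: handles controllers, knows nothing about hands."""

    def __init__(self):
        self.calls_seen = []  # every call that actually reached the runtime

    def call(self, name):
        self.calls_seen.append(name)
        if name == "xrSyncActions":
            return "controller state from runtime"
        return "XR_ERROR_FUNCTION_UNSUPPORTED"


class HandTrackingLayer:
    """Stands in for the UltraLeap API layer, sitting in front of the runtime."""

    HANDLED = {"xrCreateHandTrackerEXT", "xrLocateHandJointsEXT"}

    def __init__(self, next_in_chain):
        self.next = next_in_chain

    def call(self, name):
        if name in self.HANDLED:
            # Answered here; the call never travels further down the chain.
            return "answered by hand tracking layer"
        # Anything else is forwarded untouched.
        return self.next.call(name)


runtime = Runtime()
app_entry_point = HandTrackingLayer(runtime)

hand_result = app_entry_point.call("xrLocateHandJointsEXT")
controller_result = app_entry_point.call("xrSyncActions")

print(hand_result)
print(controller_result)
print(runtime.calls_seen)
```

Note that `runtime.calls_seen` only ever contains the controller call. In this model the runtime has no way to know that a hand tracking request was made, let alone what the answer was.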

As said by the UltraLeap rep, “we think that interacting with hands is fundamentally different to controllers”, and this is why the XR_EXT_hand_tracking API (exposing joint positions for all the bones in your hand) is completely separate and different from the controller input APIs in OpenXR (which only expose one position/orientation per controller).

When using an API layer, the underlying OpenXR implementation does not see anything, so it is not possible for the OpenXR runtime to access the hand tracking data. For applications that do not support input via the XR_EXT_hand_tracking API, and as pointed out by the UltraLeap manager, a third-party “emulation” driver needs to be implemented. This could take the form of another API layer inserted “in front” of the UltraLeap API layer. It would retrieve the hand tracking data from the UltraLeap API layer via the XR_EXT_hand_tracking API, process this data, and expose it to the application via the controller input API.
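As a starting point for anyone exploring that idea, here is a toy sketch in Python of what such an emulation layer would conceptually do. Everything in it is an assumption for illustration: the class names, the joint names, the sample positions, and the choice of a thumb-to-index pinch with a 2 cm threshold as the "trigger" are mine, not something defined by OpenXR or by the UltraLeap layer.

```python
import math

# Toy model only: class names, joint names, sample data and the pinch
# threshold are assumptions for illustration.

class HandTrackingLayer:
    """Stands in for the UltraLeap API layer: returns joint positions in metres."""

    def locate_hand_joints(self):
        # Fixed sample; a real layer would return all joints of the tracked hand.
        return {
            "palm": (0.10, 1.20, -0.30),
            "thumb_tip": (0.12, 1.25, -0.35),
            "index_tip": (0.12, 1.26, -0.35),
        }


class ControllerEmulationLayer:
    """Hypothetical shim placed in front of the hand tracking layer."""

    PINCH_THRESHOLD_M = 0.02  # assumed: fingertips closer than 2 cm count as a press

    def __init__(self, next_in_chain):
        self.next = next_in_chain

    def get_controller_state(self):
        # Pull hand data from the layer below, then reduce it to controller-style input.
        joints = self.next.locate_hand_joints()
        pinch_distance = math.dist(joints["thumb_tip"], joints["index_tip"])
        return {
            "position": joints["palm"],
            "trigger_pressed": pinch_distance < self.PINCH_THRESHOLD_M,
        }


emulated = ControllerEmulationLayer(HandTrackingLayer()).get_controller_state()
print(emulated)
```

The interesting design work is in that reduction step: many joints have to be collapsed into one pose plus a handful of buttons, and which gestures map to which buttons is a choice the emulation layer's author has to make.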

Here again, the OpenXR runtime is not involved at all with this process.

I am not 100% sure that everything I described above is accurate (especially the SteamVR and Leap part), but I think it should be close. I hope it helps explain why integrating the UltraLeap API layer cannot be done in the OpenXR runtime. I also hope it helps any developer out there get started on investigating OpenXR API layers and how to create this emulation shim.